Alveo U200 Data Center Accelerator Card

Product Description

AMD Alveo™ U200 Data Center accelerator cards are designed to meet the constantly changing needs of the modern data center, providing up to 90X higher performance than CPUs for key workloads, including machine learning inference, video transcoding, and database search and analytics. Built on the AMD 16nm UltraScale™ architecture, Alveo accelerator cards adapt to changing acceleration requirements and algorithm standards, can accelerate any workload without a hardware change, and reduce overall cost of ownership.

Alveo accelerator cards are supported by an ecosystem of AMD and partner applications for common data center workloads. For custom solutions, the Application Developer Tool Suite (Vitis™ environment) and Machine Learning Suite from AMD give developers the tools to bring differentiated applications to market.

Optional Alveo accessories extend the capabilities of, and access to, Alveo Data Center accelerator cards. Accessories include power adapter cables and USB cables.

Key Features & Benefits

Fast - Highest Performance

- Up to 90X higher performance than CPUs on key workloads at 1/3 the cost

- Over 3X higher inference throughput and 3X latency advantage over GPU-based solutions

Adaptable - Accelerate Any Workload

- Machine learning inference to video processing to any workload using the same accelerator card

- As workload algorithms evolve, use reconfigurable hardware to adapt faster than fixed-function accelerator card product cycles

Accessible - Cloud <-> On-Premises Mobility

- Deploy solutions on the cloud or on-premises interchangeably, scalable to application requirements

- Applications available for common workloads, or build your own with the application developer tool

For full product specifications, refer to the data sheet.

| Card Specifications | U200 | |

|---|---|---|

| A-U200-P64G-PQ-G | A-U200-A64G-PQ-G | |

| Compute | ||

| INT8 TOPs (peak) | 18.6 | 18.6 |

| Dimensions | ||

| Height | Full | Full |

| Length | ¾ | Full |

| Width | Dual Slot | Dual Slot |

| Memory | ||

| Off-chip Memory Capacity | 64 GB | 64 GB |

| Off-chip Total Bandwidth | 77 GB/s | 77 GB/s |

| Internal SRAM Capacity | 35 MB | 35 MB |

| Internal SRAM Total Bandwidth | 31 TB/s | 31 TB/s |

| Interfaces | ||

| PCI Express | Gen3x16 | Gen3x16 |

| Network Interfaces | 2x QSFP28 (100GbE) | 2x QSFP28 (100GbE) |

| Logic Resources | ||

| Look-up Tables (LUTs) | 892,000 | 892,000 |

| Power and Thermal | ||

| Maximum Total Power | 225W | 225W |

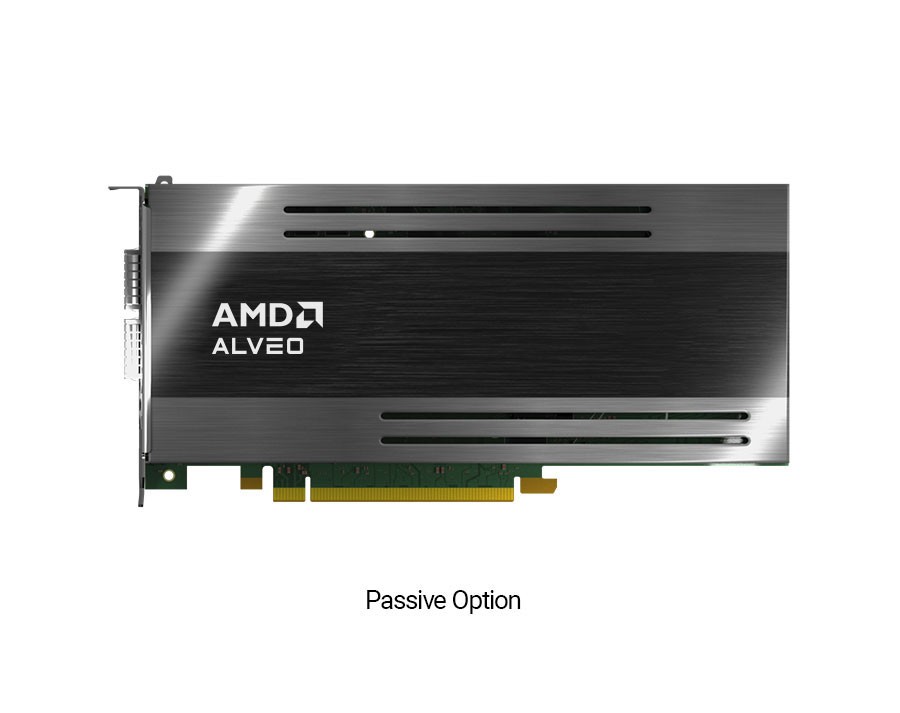

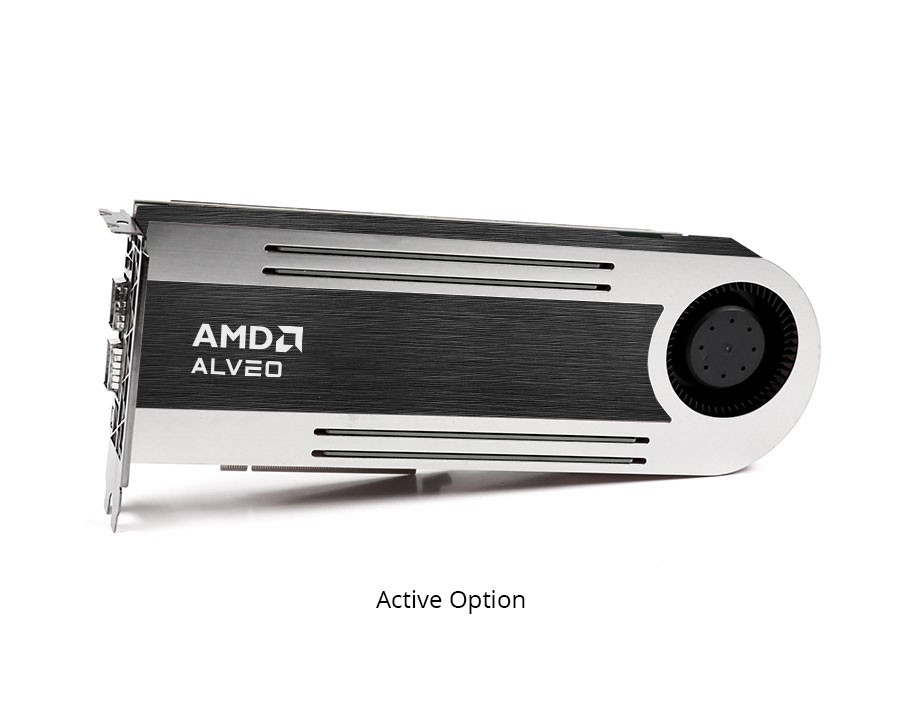

| Thermal Cooling | Passive | Active |
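To put the Card Specifications figures in proportion, a few derived ratios can be computed directly from them. The sketch below is illustrative only: the function and constant names are hypothetical, the PCIe Gen3 signalling parameters (8 GT/s per lane, 128b/130b encoding) come from the PCIe specification rather than from this page, and 1 TB/s is assumed to mean 1000 GB/s. The results are theoretical peaks, not measured throughput.

```python
# Figures copied from the Card Specifications table (identical for both part numbers).
INT8_TOPS_PEAK = 18.6      # peak INT8 tera-operations per second
MAX_POWER_W = 225          # maximum total power, watts
OFFCHIP_BW_GB_S = 77       # off-chip memory total bandwidth, GB/s
SRAM_BW_TB_S = 31          # internal SRAM total bandwidth, TB/s
QSFP28_PORTS = 2           # network interfaces
PORT_RATE_GBIT_S = 100     # 100GbE per QSFP28 port

# Assumptions NOT taken from this page: PCIe Gen3 runs at 8 GT/s per lane
# with 128b/130b line encoding; the card uses a x16 link (Gen3x16).
PCIE_GEN3_GT_S_PER_LANE = 8.0
PCIE_GEN3_ENCODING = 128 / 130
PCIE_LANES = 16


def derived_metrics() -> dict:
    """Return theoretical ratios derived from the spec table (hypothetical helper)."""
    return {
        # Peak compute per watt at maximum total power.
        "peak_int8_tops_per_watt": INT8_TOPS_PEAK / MAX_POWER_W,
        # How much faster on-chip SRAM is than off-chip memory (1 TB/s = 1000 GB/s assumed).
        "sram_to_offchip_bw_ratio": SRAM_BW_TB_S * 1000 / OFFCHIP_BW_GB_S,
        # Raw PCIe bandwidth per direction in GB/s, before protocol overhead.
        "pcie_gb_s_per_direction": PCIE_GEN3_GT_S_PER_LANE * PCIE_GEN3_ENCODING * PCIE_LANES / 8,
        # Aggregate line rate of both network ports in GB/s.
        "network_gb_s_total": QSFP28_PORTS * PORT_RATE_GBIT_S / 8,
    }


if __name__ == "__main__":
    for name, value in derived_metrics().items():
        print(f"{name}: {value:.3f}")
```

Under these assumptions the host link (just under 16 GB/s per direction) is considerably narrower than the 77 GB/s off-chip memory bandwidth, which suggests why keeping working data on the card matters for accelerated workloads.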

We’ve developed an ecosystem of AMD and partner solutions for most common workloads. Alveo Data Center accelerator cards can deliver dramatic acceleration across a broad set of applications and are reconfigurable to provide an ideal fit for the changing workloads of the modern data center. Compare how Alveo Data Center accelerator cards perform against traditional CPU architectures.

Alveo U200 Package File Downloads

The Alveo U200 Data Center accelerator card supports both Vivado design entry and the Vitis software platform. The Vivado flow is recommended for FPGA designers who want to use traditional design flows, such as RTL or HLx, while the Vitis software platform is recommended for software developers.

Note: See AR33838 for details on Tools support for new and legacy platforms.

Develop Your Own Alveo U200 Accelerated Applications in Vivado

For development using RTL and HLx, follow these steps:

Xbflash2 Utility

The xbflash2 utility flashes a custom image onto an Alveo card. For details, see the user guide at: https://xilinx.github.io/XRT/master/html/xbflash2.html

| Description | Links |
|---|---|
| RHEL/CentOS 7.8, 7.9 | xrt_202210.2.13.466_7.8.2003-x86_64-xbflash2.rpm (zip) |
| RHEL 8.1, 8.2, 8.3, 8.4, 8.5 | xrt_202210.2.13.466_8.1.1911-x86_64-xbflash2.rpm (zip) |
| Ubuntu 18.04.4 LTS, 18.04.5 LTS | xrt_202210.2.13.466_18.04-amd64-xbflash2.deb |
| Ubuntu 20.04 LTS, 20.04.1 LTS, 20.04.2 LTS, 20.04.3 LTS | xrt_202210.2.13.466_20.04-amd64-xbflash2.deb |
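When provisioning several hosts, the OS-to-package mapping in the table above can be encoded as a small lookup. This is a minimal sketch: the file names and OS versions are copied from the table, while the function name, the lower-case distribution keys, and the choice to return `None` for unlisted systems are this example's own conventions, not part of any AMD tool.

```python
# Each entry: (distributions, OS versions listed in the table, package file name).
# The two .rpm packages are listed as zip downloads on the page.
XBFLASH2_PACKAGES = [
    (("rhel", "centos"), ("7.8", "7.9"),
     "xrt_202210.2.13.466_7.8.2003-x86_64-xbflash2.rpm"),
    (("rhel",), ("8.1", "8.2", "8.3", "8.4", "8.5"),
     "xrt_202210.2.13.466_8.1.1911-x86_64-xbflash2.rpm"),
    (("ubuntu",), ("18.04.4", "18.04.5"),
     "xrt_202210.2.13.466_18.04-amd64-xbflash2.deb"),
    (("ubuntu",), ("20.04", "20.04.1", "20.04.2", "20.04.3"),
     "xrt_202210.2.13.466_20.04-amd64-xbflash2.deb"),
]


def xbflash2_package(distro, version):
    """Return the listed xbflash2 package for an OS, or None if it is not in the table."""
    distro = distro.strip().lower()
    version = version.strip()
    for distros, versions, filename in XBFLASH2_PACKAGES:
        if distro in distros and version in versions:
            return filename
    return None


if __name__ == "__main__":
    print(xbflash2_package("Ubuntu", "20.04.2"))
    print(xbflash2_package("CentOS", "7.9"))
    print(xbflash2_package("Ubuntu", "22.04"))  # not listed, so None
```

Matching on exact version strings keeps the lookup conservative: an OS release that the table does not list is reported as unsupported rather than guessed at.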

Buy Online from AMD

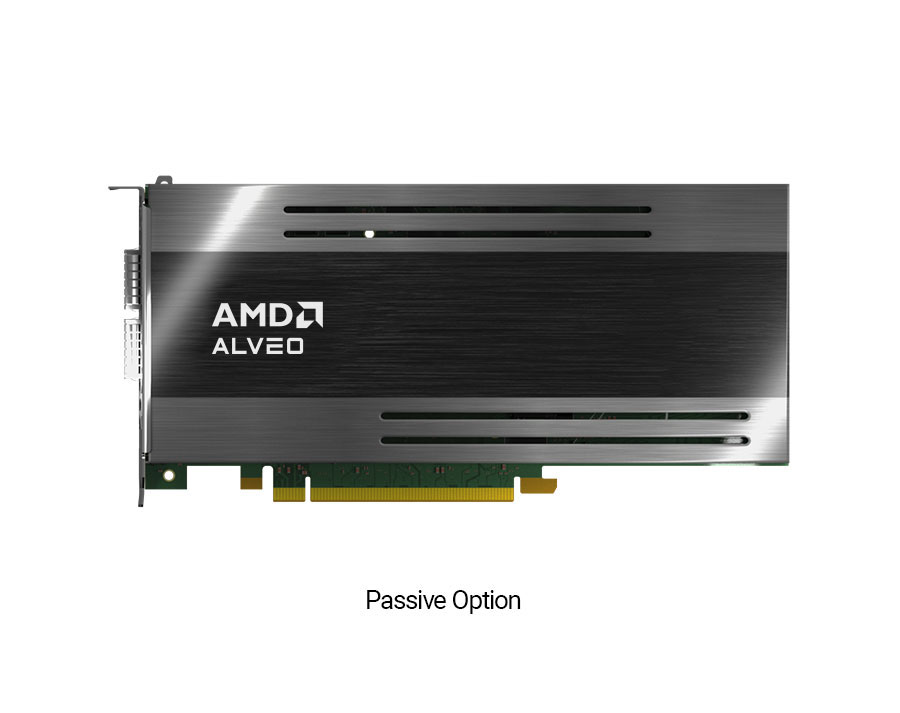

Alveo U200 (Passive)

$5,341 per board

Lead Time: 8 weeks*

(Huge backlog and supply constraint)

Part Number: A-U200-P64G-PQ-G

Alveo U200 (Active)

$5,341 per board

Lead Time: 2 weeks*

(Huge backlog and supply constraint)

Part Number: A-U200-A64G-PQ-G

* Lead time applies to quantities of 10 or fewer units. For more than 10, please contact Customer Service.