The Fifth Driver AI: Hardware-based accelerators for ADAS

Context

With a constantly growing population and limited infrastructure, the role of the automobile in society is changing rapidly. In the future, vehicle ownership is expected to give way to ride-sharing as cars become fully automated. Nevertheless, the overpromised introduction of self-driving capabilities has been repeatedly delayed, partly because of a lack of computing power to process vast amounts of data accurately and in real time. As a result, cars remain vulnerable to misbehavior in real-life scenarios, which compromises the safety of the people on board and raises doubts about the relevance and reliability of this technology. Heterogeneous platforms for automotive applications are therefore urgently needed. In this article, we validate that Xilinx's UltraScale+ Multiprocessor System-on-Chip (MPSoC) family is an attractive candidate to meet these demanding processing requirements, both for vehicle networking and for sensor data processing. Using a Zynq ZCU104 together with a camera, we capture video streams of a real traffic scene and process them with hardware accelerators and Convolutional Neural Networks (CNN) within the Vitis AI development environment. With this setup, we found that throughput increased by some orders of magnitude while latency was reduced by up to X%. Finally, we report metrics on the energy consumption and logic utilization of our implementation.

Introduction

Autonomous driving (AD) is evolving along different trends toward electric, connected, driverless, and environmentally friendly mobility. Although these trends envision the next vehicle generations differently, they all converge on a common point: highly intensive computing requirements. The introduction of wireless communication and sensing technologies is transforming cars from mechanical machinery into highly complex systems that require efficient and accurate data management and computing in real time. Processing multiple gigabytes of data in milliseconds is a challenge that current von Neumann hardware architectures cannot meet, either because the latency requirements cannot be fulfilled or because the trained machine learning models are not precise enough to react to the dynamics of traffic scenes.

Current implementations and trials of AD and Advanced Driver Assistance Systems (ADAS) provide automobiles with surround-view capabilities through high-resolution cameras, LiDAR, and radar sensors. The data gathered by these sensors must be fused into a detailed and trustworthy representation of the environment, which requires tight synchronization between the processing units within the Electronic Control Units (ECUs). This synchronization is achieved with Time-Sensitive Networking (TSN) over Ethernet and with PCIe links, which connect devices with almost negligible jitter. Once the data is acquired and fused, the automobile makes autonomous motion decisions based on machine learning models, which determine the path the car should follow. In this context, the self-driving capabilities form a Cyber-Physical System (CPS) in which the actuators of the car respond in real time to the real-world inputs gathered by the sensing devices. Consequently, the main computing requirements for making AD a reality are ultra-low computing latency for in-vehicle networking and data processing, and high bandwidth to distribute large data payloads between ECUs. These demands call for high-performance, versatile hardware such as the ZCU104, in which machine learning and communication functions can be parallelized in hardware, yielding high throughput and minimizing delay. Compared with other hardware architectures such as Graphics Processing Units (GPUs), we have observed that the UltraScale+ family consumes much less power. This is important in automotive applications because, in electric cars, the range of the vehicle is constrained by the energy stored in the batteries.

In this project, we focus on implementing machine learning algorithms for semantic segmentation and deep unimodal object detection, which allow the vehicle to recognize its surroundings. In particular, we are interested in evaluating whether these algorithms can accurately recognize pedestrians and other vehicles. For this purpose, we use the built-in Video Codec Unit (VCU) of the Zynq device together with a satellite camera, and we use Vitis AI to obtain latency, logic utilization, and throughput metrics.

The Fifth Driver AI (FDAI) is a project that aims to become a company providing highly efficient hardware and software solutions for sensor fusion and for the implementation of machine learning algorithms for autonomous driving on high-performance heterogeneous hardware. In this initial phase, FDAI is deploying this Proof-of-Concept (PoC) to show the benefits that these architectures bring to processing vast amounts of data in real time while ensuring reliability and safety. We intend to extend the findings presented here by deploying this software and its processing in a real fleet, in an urban setting, under diverse weather conditions.

Setup

We would like to sincerely thank Andy Luo and Xilinx Inc. in California for providing us with the Zynq UltraScale+ MPSoC ZCU104 Evaluation Kit, with which we started the development of this application.

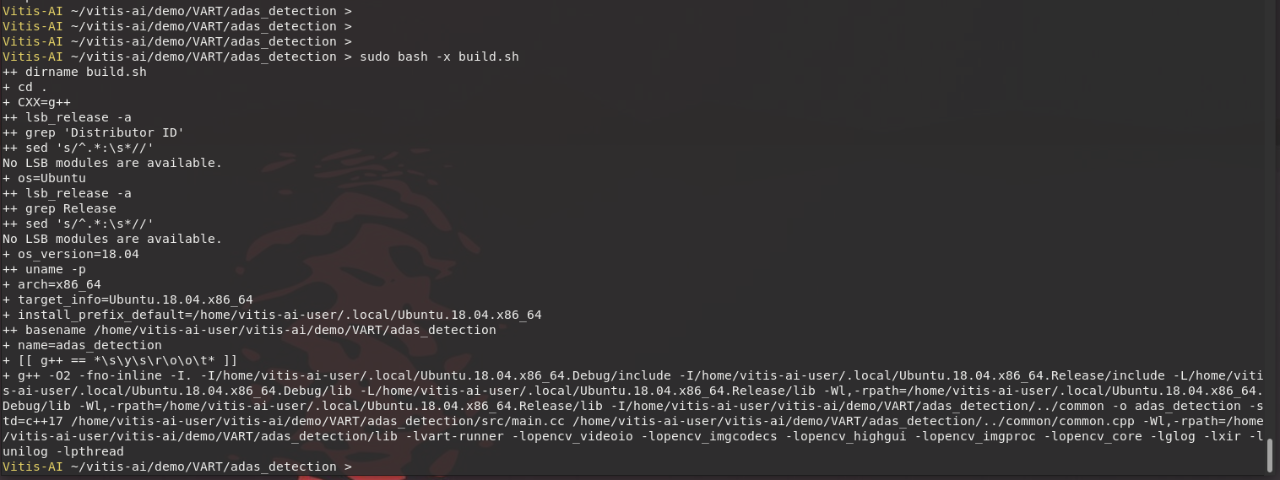

The kit consists of the ZCU104 evaluation board, an Ethernet cable, which we used to connect the board to the Raspberry Pis, a USB Type-C camera with 1080p resolution, a 4-port USB 3.0 hub, and a power supply cable, as depicted in the following pictures.

Here are the required steps to operate the board:

● Set the boot DIP switches.

● Connect the 12V power cable.

● Insert a flashed SD-Card with the PetaLinux operating system.

● Connect the Ethernet port.

● Turn on the board.

The quick-start guide shows how to run the basic board tests with the appropriate DIP switch configuration to verify that the board boots correctly. More detailed information about this procedure can be found in the Xilinx PYNQ documentation (https://pynq.readthedocs.io/en/latest/getting_started/zcu104_setup.html).

There are two mainstream software tools for programming the ZCU104 board, listed below. The board itself can be flashed through either the SD card or the JTAG interface; for this project, we tested both interfaces.

● PYNQ (Python productivity for Zynq)

● PetaLinux

PYNQ is a software framework that makes Xilinx MPSoCs easier to program by abstracting the programmable logic as hardware libraries, also known as overlays. These hardware libraries are equivalent to conventional software libraries and can be accessed easily through an Application Programming Interface (API). Therefore, the hardware can be programmed via PYNQ overlays without extensive expertise in logic circuit design. In our case, PYNQ provided a bootable Linux image containing the PYNQ Python packages and hardware libraries.

PetaLinux, on the other hand, creates a customized embedded Linux image for a given Xilinx SoC. This image includes the tools needed to use the hardware components for neural network applications and consists mainly of the U-Boot bootloader, the Linux kernel, and the root filesystem. The official PetaLinux image with Vitis AI libraries and examples for the ZCU104 can be found here. More details about the image can be found here. For this project, PetaLinux was mainly used to include the VCU IP core.

Raspberry Pi Setup

For this project, we used a Raspberry Pi with a PiCam as a satellite camera. The power requirements of the ZCU104 did not allow us to run it on batteries to capture a real-life traffic scenario. Therefore, we use the Raspberry Pi for image preprocessing and the Zynq board to complete the computation of the object detection algorithms. The Raspberry Pi was flashed with the Raspbian Lite image (now called Raspberry Pi OS Lite), and the camera is connected through the camera socket. The Raspberry Pi Foundation provides detailed documentation on how to install the PiCam.

The satellite camera captures video and exposes it using GStreamer. The video is encoded in H.264, an efficient and widely used format that is compatible with the ZCU104 VCU. We use the Real Time Streaming Protocol (RTSP) to expose the video on the Ethernet network. Detailed instructions for setting up an RTSP server on the Raspberry Pi can be found here and here.

```bash
pi@raspicam:~ $ nohup ./gst-rtsp-server-1.14.4/examples/test-launch --gst-debug=3 "( rpicamsrc bitrate=8000000 awb-mode=tungsten preview=false ! video/x-h264, width=640, height=480, framerate=30/1 ! h264parse ! rtph264pay name=pay0 pt=96 )" > nohup.out &
pi@raspicam:~ $ tail -f nohup.out
92:09:43.047083746 15112 0x70904c90 FIXME rtspmedia rtsp-media.c:2434:gst_rtsp_media_seek_full:<GstRTSPMedia@0x70938188> Handle going back to 0 for none live not seekable streams.
92:09:45.256081699 15112 0x70904c90 WARN rtspmedia rtsp-media.c:4143:gst_rtsp_media_set_state: media 0x70938188 was not prepared
118:11:29.069032950 15112 0x72d076f0 FIXME default gstutils.c:3981:gst_pad_create_stream_id_internal:<rpicamsrc12:src> Creating random stream-id, consider implementing a deterministic way of creating a stream-id
118:11:29.390741337 15112 0x70904b50 FIXME rtspmedia rtsp-media.c:3835:gst_rtsp_media_suspend: suspend for dynamic pipelines needs fixing
118:11:29.405822032 15112 0x70904b50 FIXME rtspmedia rtsp-media.c:3835:gst_rtsp_media_suspend: suspend for dynamic pipelines needs fixing
118:11:29.405957865 15112 0x70904b50 WARN rtspmedia rtsp-media.c:3861:gst_rtsp_media_suspend: media 0x72d70158 was not prepared
118:11:29.493068865 15112 0x70904b50 FIXME rtspclient rtsp-client.c:1646:handle_play_request:<GstRTSPClient@0x7136b098> Add support for seek style (null)
118:11:29.493469437 15112 0x70904b50 FIXME rtspmedia rtsp-media.c:2434:gst_rtsp_media_seek_full:<GstRTSPMedia@0x72d70158> Handle going back to 0 for none live not seekable streams.
118:11:31.630440539 15112 0x70904b50 FIXME rtspmedia rtsp-media.c:3835:gst_rtsp_media_suspend: suspend for dynamic pipelines needs fixing
118:11:31.674769553 15112 0x70904b50 WARN rtspmedia rtsp-media.c:4143:gst_rtsp_media_set_state: media 0x72d70158 was not prepared
```

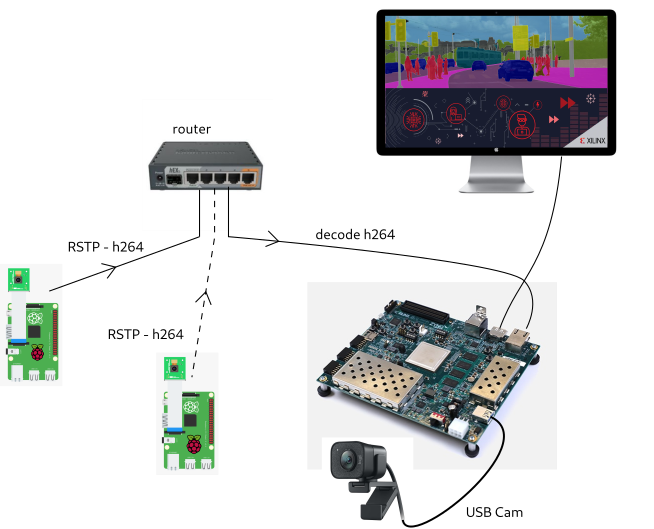

The figure above depicts the setup used for this project. The stream captured by the satellite camera is lightly preprocessed on that edge device and sent to the ZCU104 board through the Ethernet interface; in this setup, a router is required to forward the payload from one device to the other. Once the data reaches the Zynq, the video streams are decoded and processed by the Deep Learning Processing Unit (DPU), an IP core used alongside Vitis AI to perform object detection and segmentation. The results can be visualized on a monitor via GStreamer, whose pipeline must be adapted for use with Vitis.

Hardware

For this project, the hardware development addressed several tasks simultaneously. To use the VCU and the Ethernet communication between the Raspberry Pis and the ZCU104 board, the corresponding IP blocks must be added in Vivado.

Ethernet and VCU

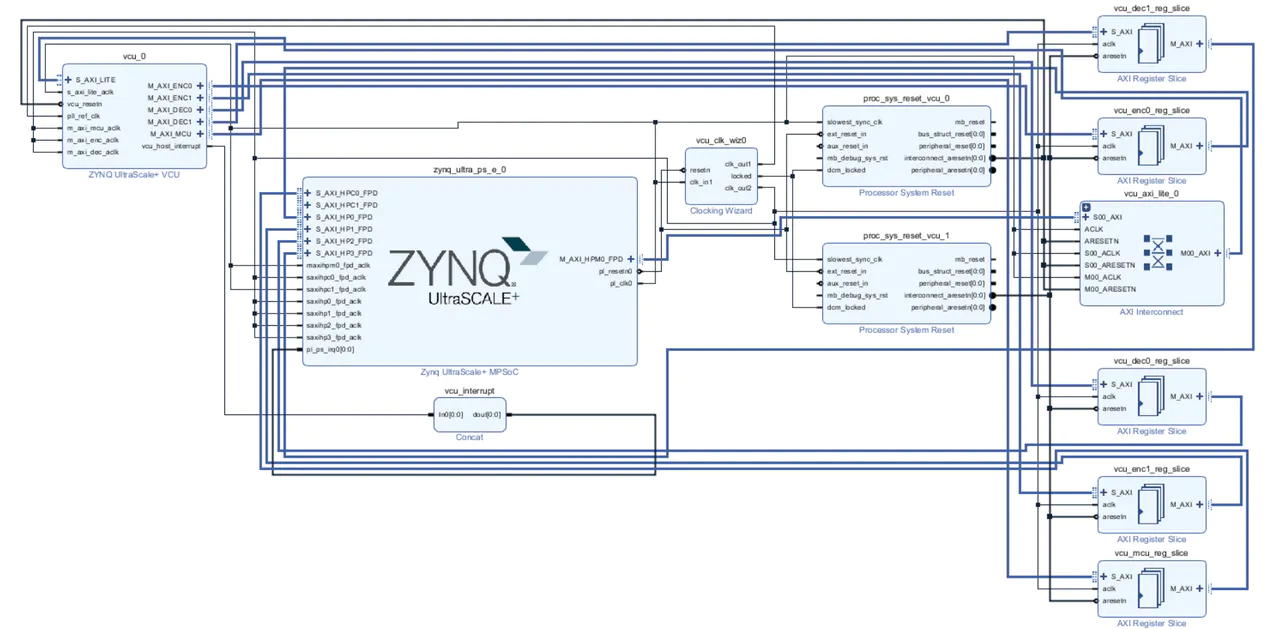

Since the ZCU104 design does not include the VCU modules by default, the VCU IP core must be added before generating the bitstream used to flash the board.

From Vivado 2020.1 on, the VCU core is available for IP block generation. The picture shows the codec within the IP integrator, prepared for later development in Vitis. Next, the HDL wrapper is created and, finally, the XSA file is exported both to flash the board and to create a software domain in Vitis. This step cannot be skipped by working directly in Vitis, because the Vitis compiler must detect the hardware modules in order to implement the machine learning models in software.

At this point, it is important to highlight the relevance of the VCU inside the ZCU104 for image processing. In semantic segmentation or object detection applications, the raw data is normally not received and transmitted as uncompressed video but as a compressed video stream containing a large number of frames. Compression matters because it eases the storage and transfer of the frames from the capturing device to the processing unit. Nevertheless, it adds design complexity, because additional hardware and software operations must be executed to convert the data back into a convenient format for manipulation. With the codec inside the PL of the ZCU104, the designer can transmit and receive video streams by exploiting the on-chip UltraRAM, which makes it possible to embed video partitions in the processing algorithm. Additionally, the VCU is flexible enough to encode and decode 4K video at 60 frames per second under software control, using frames stored in the DDR memory of the PS, the PL, or a combination of both.

In our case, the capture camera operates at 1080p and 60 Hz. According to the VCU documentation, the following memory and control interfaces must be enabled to generate the XSA file for the ZCU104:

● M_AXI_ENC0 & M_AXI_ENC1: AXI4 memory interfaces for encoding.

● M_AXI_DEC0 & M_AXI_DEC1: AXI4 memory interfaces for decoding.

● S_AXI_LITE: control interface used by the APU to configure the VCU.

● M_AXI_MCU: AXI4 interface through which the VCU's embedded MCUs access memory and communicate with the APU.

For the Ethernet communication between the Raspberry Pi and the board, we used standard Ethernet cables.

Software

The software development of this project consisted of the image preprocessing on the Raspberry Pi and the implementation of the unimodal machine learning object detection algorithm in Vitis.

Programming Flow

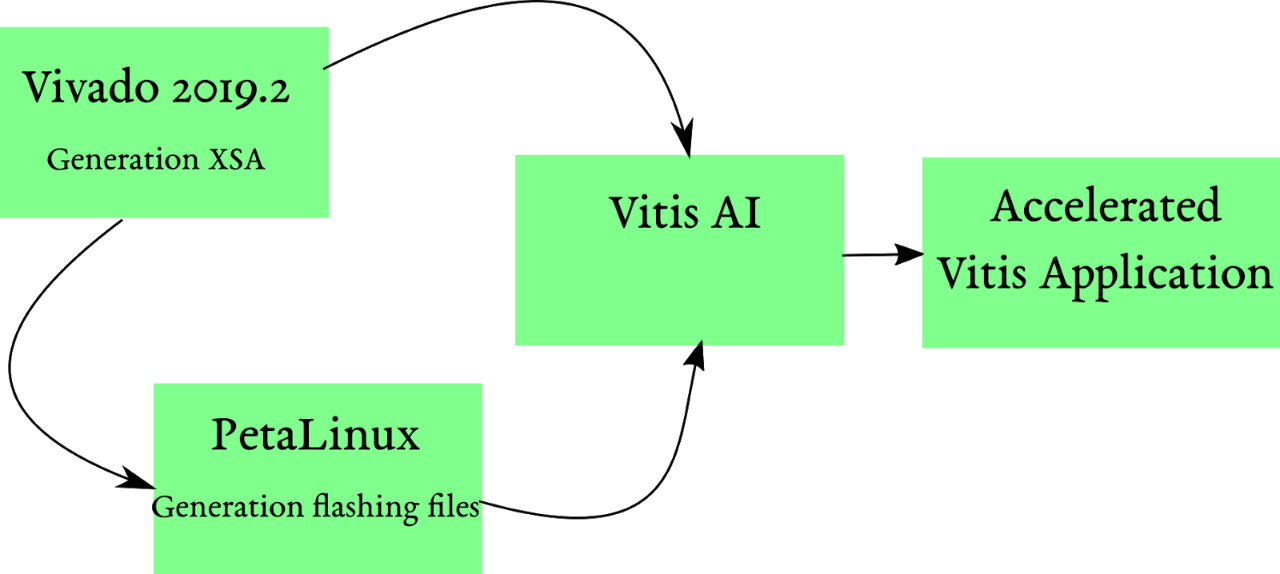

To develop accelerated machine learning applications on the ZCU104, it is important to understand the relationship between PetaLinux, Vivado, and Vitis AI. The embedded software acceleration flow starts by exporting the Hardware Definition File (HDF) or the Xilinx Shell Archive (XSA) from Vivado, which contains the bitstream used to program the board together with the Programmable Logic (PL) and Processing System (PS) configuration. The idea behind this process is to create a wrapper and generate a bitstream with these settings. Once this process concludes, the hardware file can be exported. With this file, PetaLinux creates a customized, bootable embedded Linux image to manage the hardware resources of the ZCU104. The command to create the PetaLinux project is the following:

```bash
$ petalinux-create -t project -s <path-to-bsp>
```

Here, -t stands for type, which in this case is a project, and -s stands for source, which refers to the location of the Board Support Package (BSP) file. The BSP file for the ZCU104 can be downloaded directly from Xilinx's PetaLinux website. It is important to keep the PetaLinux and BSP versions consistent. For this project, we used version 2019.2 for both PetaLinux and the BSPs of the ZCU102 and ZCU104 development kits. To build against the custom hardware exported from Vivado rather than the default design of the BSP, the hardware description can be imported into the project with `petalinux-config --get-hw-description=<path-to-hardware-file-directory>`.

Once the project is configured, the bootable image can be generated by building the PetaLinux project with the following command:

```bash
$ petalinux-build
```

Concise but detailed documentation about PetaLinux can be found here. To boot the image from the SD card, mount the SD card on the host computer and copy the BOOT.BIN and image.ub files to it. The pre-built versions of these files, shipped with the BSP, are located in the PetaLinux project directory:

`/petalinux-build-ZCU104-directory/pre-built/linux/images`

Images produced by petalinux-build are written to the project's `images/linux` directory instead, where BOOT.BIN must first be generated with `petalinux-package --boot`.

The graph above depicts the relationship between the development tools used in this project. Once the ZCU104 has been flashed through the SD card, development of the accelerated machine learning algorithm can start. The Vitis Integrated Development Environment (IDE) uses the XSA hardware specification file from Vivado to create the domain in which the applications to be accelerated are developed. Because Vitis separates hardware from software, the user can develop and deploy accelerated machine learning applications on different Xilinx platforms without major configuration changes. Extensive documentation about Vitis AI can be found here.

Vitis AI Integration

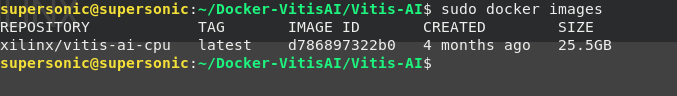

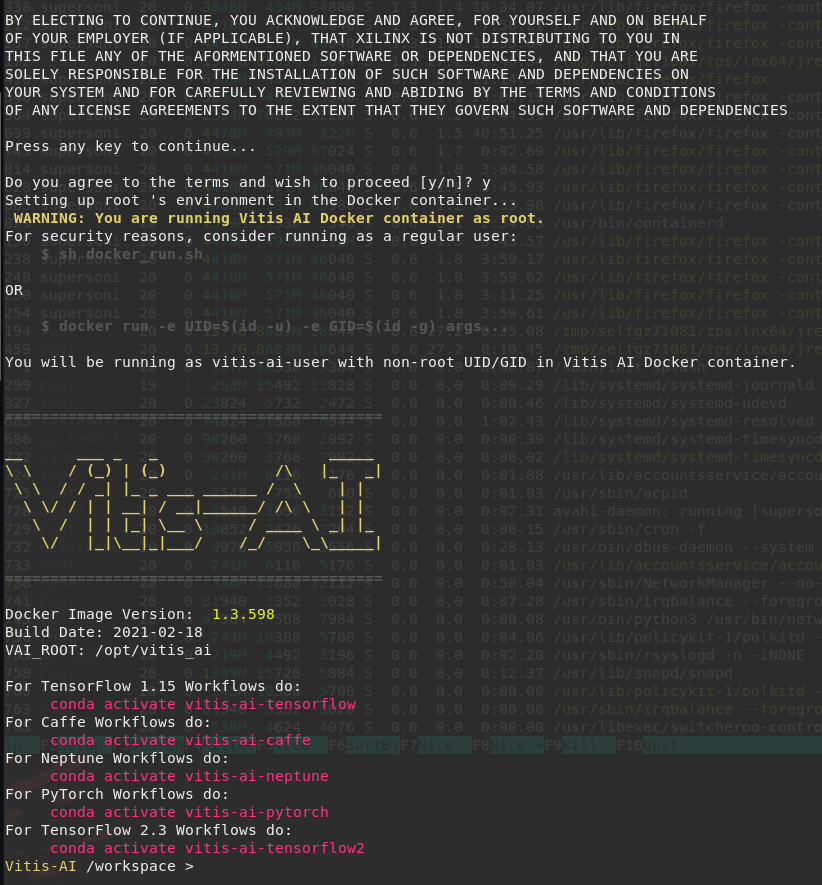

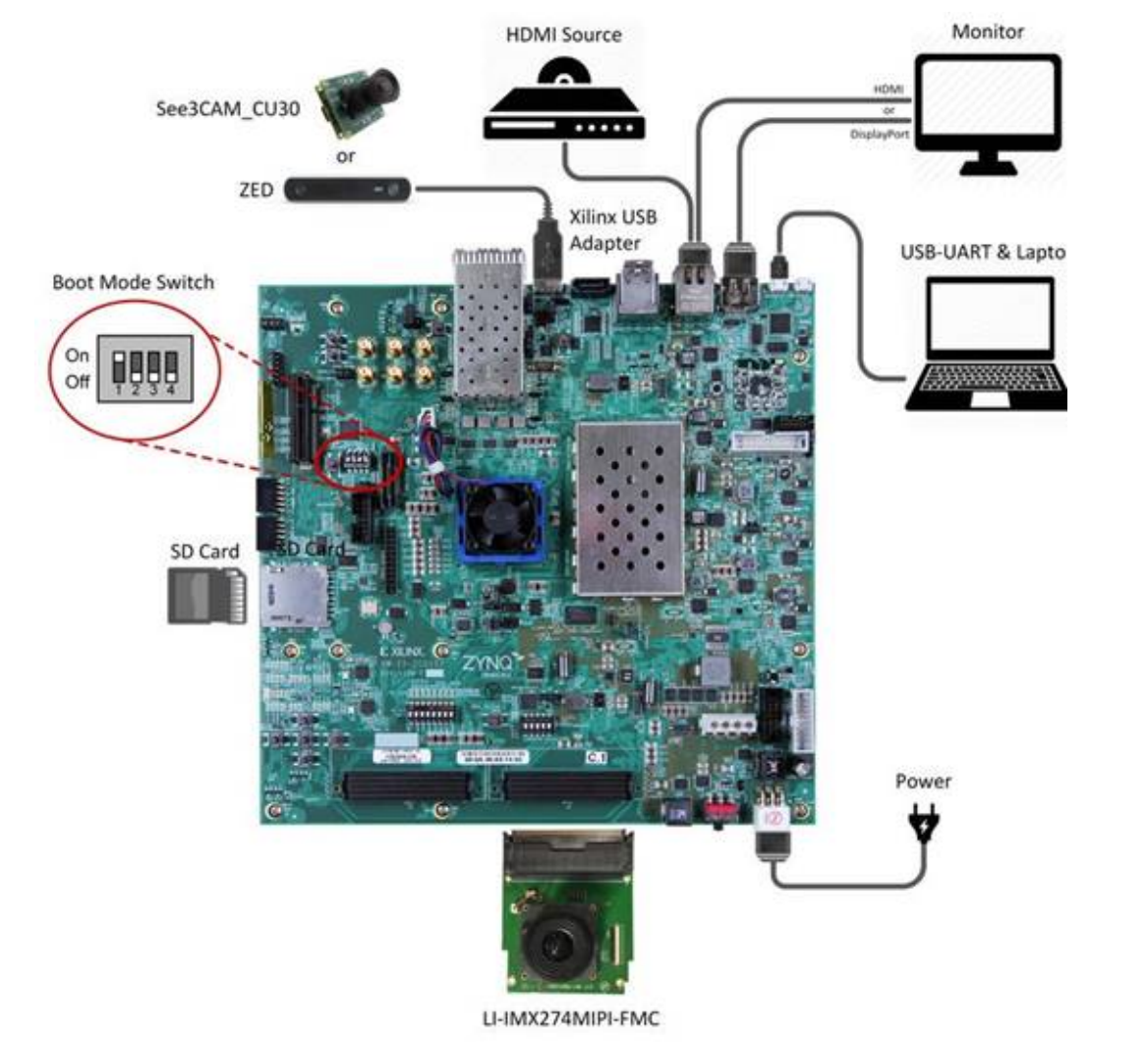

Instructions for installing Vitis AI from its Docker containers are provided at the following link. By building the pre-trained models within the Docker images, the user can create deep learning applications. Besides the Docker installation, the user can also download the source files from Xilinx's website and compile them to install Vitis AI manually on a host computer. After a successful installation, the terminal should look like this:

This image contains the available machine learning frameworks, which are used to train the models. Each framework comes with pre-optimized neural network models for object detection, classification, and image segmentation, among others. Starting from these trained models, Vitis AI optimizes, quantizes, and compiles them before deploying them on hardware kernels such as the Deep Learning Processing Unit (DPU). DPUs are hardware accelerators that are deployed on our edge device, the ZCU104.

The importance of Vitis AI lies in its low-level optimization of the models before they are implemented in hardware. To exploit the DPUs, the quantizer has to convert the weights and activations of the trained models from 32-bit floating point to 8-bit integers, the data format expected by the accelerators.

In our case, we cross-compile our own source code against the Xilinx Vitis AI Runtime (VART). To set up the host and target devices, please follow this tutorial.

Model Zoo

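Vitis AI provides pretrained, ready-to-use models for many Xilinx boards. For this project, we selected the TensorFlow framework to train our object detection and classification model. Models are quantized with the framework-specific quantizer provided in the Vitis AI Docker images (vai_q_tensorflow for TensorFlow, vai_q_caffe for Caffe) and then compiled for the DPU. The commands below compile a quantized Caffe YOLOv4 model for the ZCU104 DPU (DPUCZDX8G) with vai_c_caffe.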

```bash
ARCH=/opt/vitis_ai/compiler/arch/DPUCZDX8G/ZCU104/arch.json
vai_c_caffe --prototxt yolov4_quantized/deploy.prototxt \
            --caffemodel yolov4_quantized/deploy.caffemodel \
            --arch ${ARCH} \
            --output_dir yolov4_compiled/ \
            --net_name dpu_yolov4_voc \
            --options "{'mode':'normal','save_kernel':''}"
```

Once the model has been exported in `.xmodel` format, it can be transferred to the ZCU104 and used in the inference phase.

Model integration

Xilinx provides the VART API, which can be used from C++ or Python. The C++ program below uses the higher-level Vitis AI Library YOLOv3 class, which loads the compiled model by name, runs it on each frame of a video file or stream, and draws the detected bounding boxes.

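```cpp
// Runs the detector on every frame of a video file or stream and draws
// the detected bounding boxes with their class name and score.
#include <glog/logging.h>

#include <algorithm>
#include <iostream>
#include <memory>
#include <string>
#include <vector>

#include <opencv2/core.hpp>
#include <opencv2/highgui.hpp>
#include <opencv2/imgproc.hpp>
#include <opencv2/opencv.hpp>

#include <xilinx/ai/demo.hpp>
#include <xilinx/ai/nnpp/yolov3.hpp>
#include <xilinx/ai/yolov3.hpp>

using namespace std;
using namespace cv;

int main(int argc, char** argv) {
  if (argc < 2) {
    cerr << "usage: " << argv[0] << " <video file or stream>" << endl;
    return 1;
  }

  // One name per class index produced by the model.
  const vector<string> classes = {"Cars", "LightTraffic"};

  // Load the compiled model by name; the second argument is need_preprocess.
  auto yolo = xilinx::ai::YOLOv3::create("ad-detection-network", false);

  VideoCapture cap;
  if (!cap.open(argv[1])) {
    cerr << "cannot open " << argv[1] << endl;
    return 1;
  }

  while (true) {
    Mat img;
    cap >> img;
    if (img.empty()) break;  // end of the video or stream

    auto results = yolo->run(img);

    for (const auto& box : results.bboxes) {
      const int label = box.label;
      // Skip labels that have no entry in the class list.
      if (label < 0 || label >= static_cast<int>(classes.size())) continue;

      // Box coordinates are normalized to [0, 1]; convert them to pixels.
      float xmin = box.x * img.cols + 1;
      float ymin = box.y * img.rows + 1;
      float xmax = xmin + box.width * img.cols;
      float ymax = ymin + box.height * img.rows;

      // Clamp the box to the image borders.
      xmin = std::max(xmin, 1.0f);
      ymin = std::max(ymin, 1.0f);
      xmax = std::min(xmax, static_cast<float>(img.cols));
      ymax = std::min(ymax, static_cast<float>(img.rows));

      const float confidence = box.score;
      rectangle(img, Point(xmin, ymin), Point(xmax, ymax),
                Scalar(200, 255, 255), 1, LINE_8, 0);
      putText(img, cv::format("%s %.2f", classes[label].c_str(), confidence),
              Point(xmin, ymin - 10), FONT_HERSHEY_DUPLEX, 1.0,
              CV_RGB(118, 185, 0), 2);
    }

    imshow("frame", img);
    if (waitKey(1) == 27) break;  // Esc stops the loop
  }
  return 0;
}
```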

After installing the Vitis AI libraries and setting up both the host and target devices, the result should look like the screenshots in this section: the first one depicts the Vitis AI image built on the host computer, and the second one presents the image used for flashing the board. Note that the ZCU104 has several interfaces for communicating with the host computer. For this project, the preferred option is an SSH connection between the host and the board through its built-in Ethernet port. The VART files can then be downloaded and compiled directly on the board to run the machine learning applications.

Alternatively, the board can be flashed with the pre-built image provided by Xilinx. To do so, first set the boot mode switch as indicated in the following image, so that the board recognizes both the hardware and software modules of this implementation.

For more information about deploying machine learning algorithms, we highly recommend the TensorFlow tutorials on object detection and image segmentation. Depending on your processing requirements and preferences, tutorials for other deep learning frameworks such as Caffe may also be useful; they can be found here.

Results

After deploying this setup in a real traffic scenario, we were able to identify different objects such as cars, people, and traffic lights. The following picture presents a snapshot of the streams we captured when we deployed our solution in the streets of Paris.

The object detection algorithm relied on the DNNDK version of Vitis AI; the code is available in the project repository. The Vitis AI documentation provides useful examples for running different models from the AI Model Zoo. For our use case, object detection with the YOLOv4 model has a latency of 75 ms in a single-thread application, which corresponds to 13.3 FPS. This is not real time, but Xilinx provides several tools to improve performance, such as model pruning, which according to Xilinx can reduce the model size by up to 70% without reducing model precision.

This optimization is available in the AI Model Zoo for the YOLOv2 model, which has a latency of 11 ms, corresponding to about 90 FPS. This model can therefore handle more than two image sources while delivering real-time object detection.

The inference latency can be compared with that of other devices: on the Jetson Nano, inference with the SSD MobileNet v2 model took 47 ms, and on the Raspberry Pi 3B the same model took 1.48 seconds. The ZCU104 achieves a latency of 38 ms for the same baseline model, and the latency of its pruned version is expected to be around 17 ms.

Conclusions

Even if driverless cars cause accidents in the future, and even though they still struggle in bad weather and poor lighting conditions, we are convinced that the deployment of autonomous driving will save many lives around the globe. Recent studies have shown that the main cause of accidents is human drivers, not the machines themselves. In this project, we showed that many of the requirements for making AD a reality in the near future can be fulfilled with heterogeneous computing platforms such as the Xilinx UltraScale+ family. Although the large-scale deployment of the Zynq family within the ECUs of the next vehicle generations is still under extensive testing and research, our results indicate that it excels at providing ultra-low computing latency and high throughput. This computationally intensive use case cannot be tackled efficiently with conventional architectures such as x86 processors, nor with power-hungry hardware such as NVIDIA or AMD GPUs.

As a next step, we would like to extend this project to multimodal object detection with different sensors, including LiDAR, in order to identify when and how sensor fusion should take place in a real-life traffic scene.


About Javier

Javier Acevedo is a development engineer focused on the deployment of hardware accelerators and ASICs for next-generation (5G) wireless communication systems and industrial testbeds. He is also pursuing a PhD in Dresden in the field of hardware accelerators for the 5G Radio Access Network.